Nvidia held its big GPU Technology Conference in San Jose this week. As the third-largest company in the world by market capitalization, Nvidia has seen its profile rise dramatically over the past couple of years, primarily due to its position in the data center and AI processing worlds. As a result, its flagship conference becomes less “graphics-related” every year as its products push further into those other spaces.

This year had very few media and entertainment-focused sessions, and even those were very AI-centric. Generative AI is where graphics and AI meet, and some amazing work is being done in that area, but I would still classify much of that as “art” as opposed to “storytelling” because the tools have not yet matured to the point of giving users the control needed to tightly steer the end result. My friend just won an AI film festival, but optimizing for the available tools steered the workflow more than the story did.

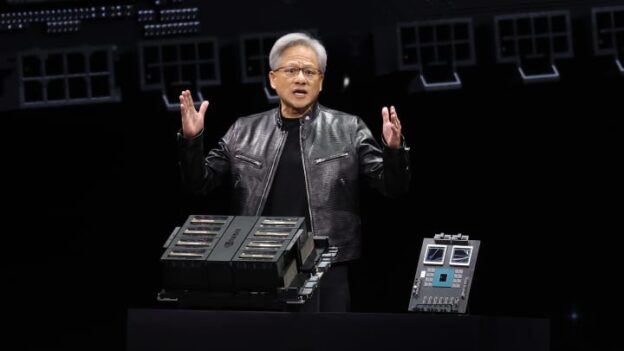

Events like GTC are how those tools get improved, but this conference is more for the people developing those tools than for the people using them. It is good to know what is coming, but there is still a lag between when new technologies are announced and when they are ready to be used in the marketplace. This was also the first GTC to be held in person in San Jose since the pandemic moved everything online in 2020. Jensen Huang gave the keynote presentation at SAP Center in San Jose, which is much larger than the venues of previous keynotes I’ve attended.

The main focus of his presentation was the new Blackwell architecture for supercomputers. There was no mention of putting that technology into a PCIe card, although it is my understanding that that’s what will be coming next. The presentation hardly even referenced the SXM socket version for existing GPU computers. Instead, Huang primarily focused on the GB200 Grace Blackwell NVL platform, which combines two Blackwell dual-die GPUs with a single existing Grace CPU with 72 Arm cores. The entire board has nearly 1TB of memory and 16TB/s of memory bandwidth.

The new DGX cluster, GB200 NVL72, has 72 Blackwell GPUs across 18 NVLink-connected nodes, with two boards in each node and four 800Gb NICs in a water-cooled MGX enclosure with a dedicated switch. That rack offers 720 petaflops of FP8 computing power at 120 kilowatts of power usage. That is 10 petaflops per GPU, which is 2.5x more than the previous-generation Hopper chips and far more than Ada. (Nvidia pointed out that the first DGX delivered 0.17 petaflops eight years ago, so this is roughly a 4000x improvement for AI processing via Tensor Cores.)
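The rack numbers above are easy to sanity-check with a little arithmetic. This is only a rough sketch using the figures quoted in this article; the constant and function names are my own, and I am treating "2.5x more" as a simple ratio, which is an assumption.

```python
# Figures quoted above for the GB200 NVL72 rack; all names are illustrative.
NODES = 18
BOARDS_PER_NODE = 2
GPUS_PER_BOARD = 2
RACK_FP8_PETAFLOPS = 720
RACK_POWER_KW = 120
HOPPER_RATIO = 2.5       # Blackwell vs. Hopper per GPU (assumed to be a plain ratio)
CLAIMED_SPEEDUP = 4000   # rack vs. the first DGX, as quoted


def rack_summary():
    """Derive per-GPU and per-kilowatt figures from the quoted rack totals."""
    gpus = NODES * BOARDS_PER_NODE * GPUS_PER_BOARD
    per_gpu_pf = RACK_FP8_PETAFLOPS / gpus
    return {
        "gpus": gpus,
        "petaflops_per_gpu": per_gpu_pf,
        "petaflops_per_kw": RACK_FP8_PETAFLOPS / RACK_POWER_KW,
        # Hopper figure implied by the quoted ratio, not a published spec.
        "implied_hopper_petaflops": per_gpu_pf / HOPPER_RATIO,
        # Baseline that the quoted speedup would imply for the first DGX.
        "implied_first_dgx_teraflops": RACK_FP8_PETAFLOPS * 1000 / CLAIMED_SPEEDUP,
    }


if __name__ == "__main__":
    for name, value in rack_summary().items():
        print(f"{name}: {value}")
```

Working backward from the 4000x claim gives a first-DGX baseline in the neighborhood of 180 teraflops, which is a useful cross-check on the units being thrown around.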

It is difficult to accurately compare the Blackwell chips with existing GPUs because Nvidia is no longer benchmarking with the same measurements: AI workloads use FP8 and now FP4, which inflates the performance numbers compared to the traditional FP32 computations used in graphics. Best I can tell, Ada PCIe GPUs peak at 600 teraflops of FP32 Tensor processing, while, if the patterns follow the usual formula, this new Blackwell chip should be able to do 2,500 teraflops at FP32 precision, so roughly a 4x improvement. The calculations are further complicated by the transition from teraflops (trillion) to petaflops (quadrillion), but suffice it to say that the new chips have gotten really fast. I would predict that a new PCIe card based on this architecture should have two to three times the overall performance of current Ada-based GPUs. But based on the previous branching between the Hopper and Ada Lovelace architectures, Blackwell might not become the basis for a new GPU at all, and we might be waiting for a separate development there.
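For anyone who wants to follow the math, here is a minimal sketch of the conversion I am describing. It assumes the rule of thumb that Tensor throughput halves every time the operand width doubles, which is my assumption rather than anything Nvidia has published for Blackwell, and it ignores sparsity and other marketing multipliers. The function name is hypothetical.

```python
def scale_throughput(teraflops, from_bits, to_bits):
    """Rescale a throughput figure between precisions.

    Assumes throughput is inversely proportional to operand width
    (a rule of thumb, not a guaranteed hardware property).
    """
    return teraflops * from_bits / to_bits


# Figures quoted in this article.
BLACKWELL_FP8_TERAFLOPS = 10_000   # 10 petaflops per GPU
ADA_FP32_TENSOR_TERAFLOPS = 600    # my best estimate for Ada PCIe cards

blackwell_fp32 = scale_throughput(BLACKWELL_FP8_TERAFLOPS, 8, 32)
blackwell_fp4 = scale_throughput(BLACKWELL_FP8_TERAFLOPS, 8, 4)
improvement = blackwell_fp32 / ADA_FP32_TENSOR_TERAFLOPS

if __name__ == "__main__":
    print(f"Blackwell at FP32: {blackwell_fp32:.0f} teraflops")
    print(f"Blackwell at FP4:  {blackwell_fp4:.0f} teraflops")
    print(f"Improvement over Ada at FP32: {improvement:.2f}x")
```

The same function shows why FP4 numbers look so dramatic: halving the precision from FP8 doubles the headline figure without the silicon getting any faster.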

On the software side, the biggest news was Nvidia Inference Microservices, or NIMs, which are prepackaged, pretrained AI software tools that can be easily deployed and combined to make better use of all of this new computing hardware. Nvidia also has tools for streaming Omniverse cloud data directly to Apple’s new Vision Pro headsets, which should add value to both products.

I also watched a session where a panel from the Vegas Sphere team discussed the workflow that they use to create content for that venue. They are moving massive amounts of data around to drive a 16Kx16K display with uncompressed 12-bit imagery, some of which is generated in real time. The team uses Nvidia both for the 100GbE networking to transport SMPTE ST 2110 streams and for the graphics processing.
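To give a sense of scale, here is a back-of-the-envelope estimate of the raw data rate for a display like that. The panel did not give these specifics, so the exact pixel dimensions (16384x16384), three color channels per pixel, and the 60 fps frame rate are all assumptions on my part, and the sketch ignores ST 2110 packet overhead entirely.

```python
import math

# Assumptions, not figures from the Sphere team:
WIDTH = 16_384          # taking "16K" to mean 16384 pixels
HEIGHT = 16_384
CHANNELS = 3            # assumes full RGB with no chroma subsampling
BITS_PER_CHANNEL = 12   # "uncompressed 12-bit imagery", as quoted
FRAMES_PER_SECOND = 60  # hypothetical frame rate
LINK_GBPS = 100         # one 100GbE link, ignoring protocol overhead


def bits_per_frame(width, height, channels, bits_per_channel):
    """Raw size of one uncompressed frame in bits."""
    return width * height * channels * bits_per_channel


def links_needed(total_gbps, link_gbps):
    """Minimum number of links to carry the stream at full line rate."""
    return math.ceil(total_gbps / link_gbps)


frame_bits = bits_per_frame(WIDTH, HEIGHT, CHANNELS, BITS_PER_CHANNEL)
stream_gbps = frame_bits * FRAMES_PER_SECOND / 1e9
links = links_needed(stream_gbps, LINK_GBPS)

if __name__ == "__main__":
    print(f"One frame: {frame_bits / 8 / 1e9:.2f} GB")
    print(f"Stream:    {stream_gbps:.1f} Gb/s")
    print(f"100GbE links needed (no overhead): {links}")
```

Under those assumptions, a single uncompressed stream already needs several 100GbE links, which is why the networking side of the workflow matters as much as the GPUs.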

Nvidia has also released some other new products over the past few months that might be more immediately relevant to users in the M&E space. The RTX 2000 Ada is a lower-end Ada-generation PCIe-based GPU that fits in a half-height, half-length, dual-slot space, with a PCIe 4.0 x8 connector, for 1U and 2U rackmount systems or other small-form-factor cases. With 16GB of RAM and 12 teraflops of performance, the RTX 2000 Ada is 50% faster than the previous Ampere RTX 2000 and on par with the original Turing-based RTX 5000. It is probably enough GPU power for most editors, but not for 3D artists.

There are also new lower-end Ada-based mobile GPUs in the RTX 500 and RTX 1000 range for laptops. Low-end GPUs didn’t use to get my attention, but as processors have gotten more powerful, a lower-end GPU is enough for many users, and the options are worth exploring. With about 2,000 CUDA cores and 9 teraflops of performance, even the lowest RTX 500 has respectable performance. People still editing in HD, or even UHD, who aren’t using HDR or floating point processing might be well-served by a basic RTX GPU that still has hardware acceleration for codecs and dedicated video memory, without many thousands of CUDA cores. It’s a cheaper solution that meets their needs while allowing for lighter-weight systems with longer battery life.

On the software release front, Nvidia has revamped the control centers for both the GeForce Experience and the more professionally focused Quadro Experience into an entirely new, unified Nvidia App. It is a totally new UI, and significantly, it no longer requires an online account and login to use basic features of the card. Having tried it, I think it is a welcome improvement over the previous solution.

Nvidia also recently released Chat with RTX, which is a large language model GPT-type chat program that runs entirely on local RTX GPUs. You need a sufficient source of data to train it on, but Chat with RTX might be a way for people interested in AI to begin experimenting with creating their own models instead of just using cloud-based options that other people have trained. There is a lot to this AI transition, and Nvidia and its products are at the center of the process.

Nvidia’s GTC 2024: Generative AI — Where Graphics and AI Meet