Stay tuned throughout the week for coverage from Open Source AI Week in San Francisco.

NVIDIA’s on the ground at Open Source AI Week. Stay tuned for a celebration highlighting the spirit of innovation, collaboration and community that drives open-source AI forward. Follow NVIDIA AI Developer on social channels for additional news and insights.

Andrej Karpathy’s Nanochat Teaches Developers How to Train LLMs in Four Hours 🔗

Computer scientist Andrej Karpathy recently introduced Nanochat, calling it “the best ChatGPT that $100 can buy.” Nanochat is an open-source, full-stack large language model (LLM) implementation built for transparency and experimentation. In about 8,000 lines of minimal, dependency-light code, Nanochat runs the entire LLM pipeline — from tokenization and pretraining to fine-tuning, inference and chat — all through a simple web user interface.

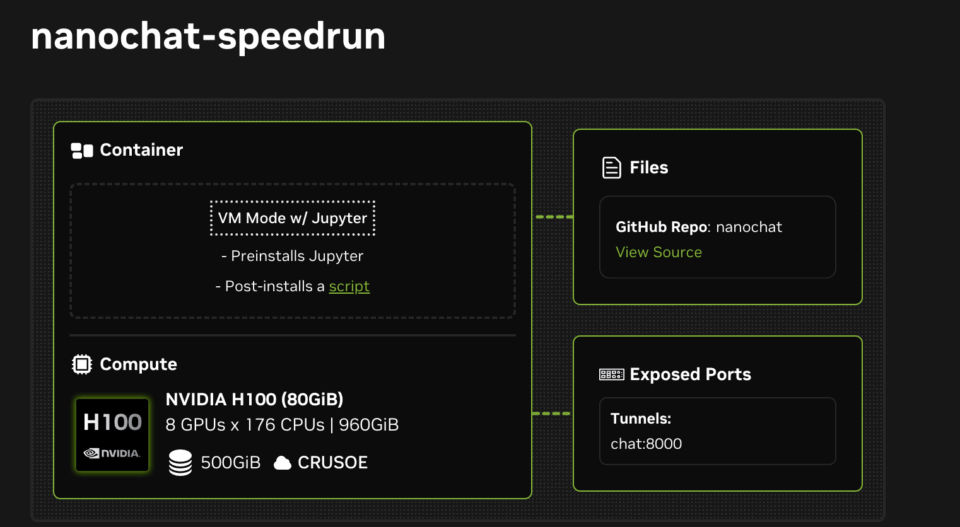

NVIDIA is supporting Karpathy’s open-source Nanochat project by releasing two NVIDIA Launchables, making it easy to deploy and experiment with Nanochat across various NVIDIA GPUs.

With NVIDIA Launchables, developers can train and interact with their own conversational model in hours with a single click. The Launchables dynamically support different-sized GPUs — including NVIDIA H100 and L40S GPUs — on various clouds without need for modification. They also automatically work on any eight-GPU instance on NVIDIA Brev, so developers can get compute access immediately.

The first 10 users to deploy these Launchables will also receive free compute access to NVIDIA H100 or L40S GPUs.

Start training with Nanochat by deploying a Launchable:

Andrej Karpathy’s Next Experiments Begin With NVIDIA DGX Spark

Today, Karpathy received an NVIDIA DGX Spark — the world’s smallest AI supercomputer, designed to bring the power of Blackwell right to a developer’s desktop. With up to a petaflop of AI processing power and 128GB of unified memory in a compact form factor, DGX Spark empowers innovators like Karpathy to experiment, fine-tune and run massive models locally.

Building the Future of AI With PyTorch and NVIDIA 🔗

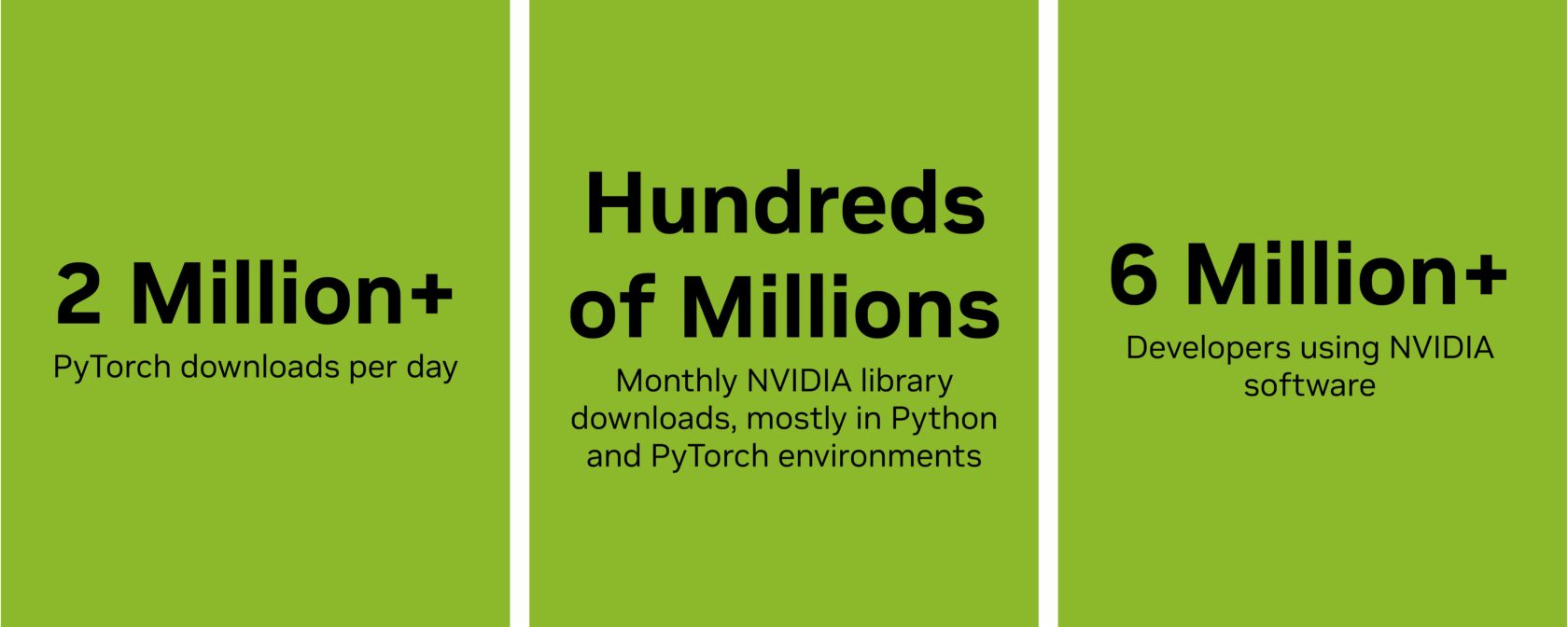

PyTorch, the fastest-growing AI framework, derives its performance from the NVIDIA CUDA platform and uses the Python programming language to unlock developer productivity. This year, NVIDIA added Python as a first-class language to the CUDA platform, giving the PyTorch developer community greater access to CUDA.

CUDA Python includes key components that make GPU acceleration in Python easier than ever, with built-in support for kernel fusion, extension module integration and simplified packaging for fast deployment.

Following PyTorch’s open collaboration model, CUDA Python is available on GitHub and PyPI.

Every month, developers worldwide download hundreds of millions of NVIDIA libraries — including CUDA, cuDNN, cuBLAS and CUTLASS — mostly within Python and PyTorch environments. CUDA Python provides nvmath-python, a new library that acts as the bridge between Python code and these highly optimized GPU libraries.

Plus, kernel enhancements and support for next-generation frameworks make NVIDIA accelerated computing more efficient, adaptable and widely accessible.

NVIDIA maintains a long-standing collaboration with the PyTorch community through open-source contributions and technical leadership, as well as by sponsoring and participating in community events and activations.

At PyTorch Conference 2025 in San Francisco, NVIDIA will host a keynote address, five technical sessions and nine poster presentations.

NVIDIA’s on the ground at Open Source AI Week. Stay tuned for a celebration highlighting the spirit of innovation, collaboration and community that drives open-source AI forward. Follow NVIDIA AI Developer on social channels for additional news and insights.

NVIDIA Spotlights Open Source Innovation 🔗

Open Source AI Week kicks off on Monday with a series of hackathons, workshops and meetups spotlighting the latest advances in AI, machine learning and open-source innovation.

The event brings together leading organizations, researchers and open-source communities to share knowledge, collaborate on tools and explore how openness accelerates AI development.

NVIDIA continues to expand access to advanced AI innovation by providing open-source tools, models and datasets designed to empower developers. With more than 1,000 open-source tools on NVIDIA GitHub repositories and over 500 models and 100 datasets on the NVIDIA Hugging Face collections, NVIDIA is accelerating the pace of open, collaborative AI development.

Over the past year, NVIDIA has become the top contributor in Hugging Face repositories, reflecting a deep commitment to sharing models, frameworks and research that empower the community.

Openly available models, tools and datasets are essential to driving innovation and progress. By empowering anyone to use, modify and share technology, it fosters transparency and accelerates discovery, fueling breakthroughs that benefit both industry and communities alike. That’s why NVIDIA is committed to supporting the open source ecosystem.

We’re on the ground all week — stay tuned for a celebration highlighting the spirit of innovation, collaboration and community that drives open-source AI forward, with the PyTorch Conference serving as the flagship event.