$2 billion here, $2 billion there, $2 billion everywhere and pretty soon it starts adding up to real money. But with the current costs of a rack of Grace-Blackwell compute and the expected – and much higher – cost of a rack of future Vera-Rubin compute, the cash coming in is what funds the cash being invested in the chippery ecosystem by AI industry juggernaut Nvidia.

Our best guess is that Nvidia will bring somewhere between $150 billion and $160 billion to the bottom line in its fiscal 2027 year that ends next January, and Big Green can do a lot of $2 billion investments to help seed the kinds of chips it wants the market to make in volume and at the same time secure alliances – sometimes with competitors – that will help its own platform proliferate in what could very well be a less homogeneous GenAI future.

Chip maker Marvell, which like Nvidia has essentially become an AI datacenter company, is the latest to get a $2 billion investment from Big Green. The investment is being made to help Marvell ramp up various technologies into volume production, and is being made concurrent with (but is apparently not dependent on) a strategic partnership to allow some mixing and matching of various technologies from both companies for customers to use to build their AI systems.

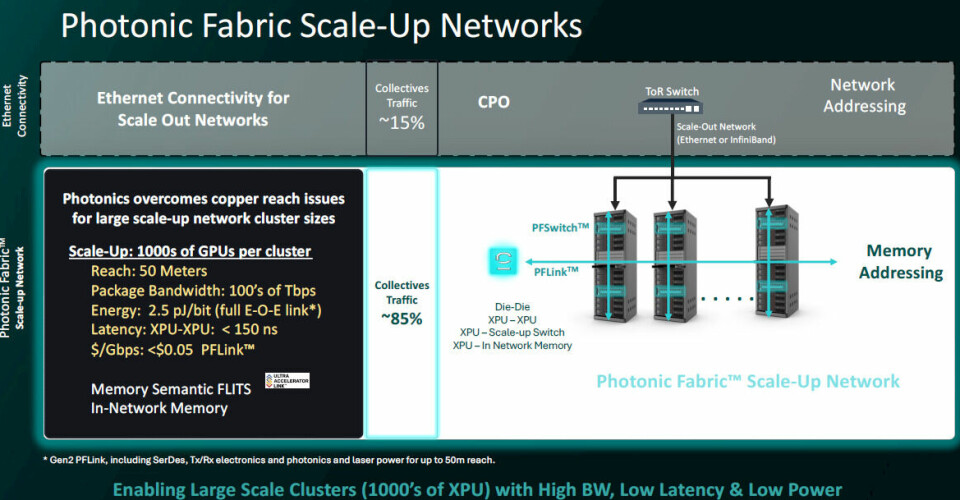

The $2 billion investment in Marvell mirrors the pair of $2 billion investments that Nvidia made in Lumentum and Coherent as March was getting started. In the case of those two deals, Nvidia is making sure Lumentum and Coherent can ramp up production of lasers used in co-packaged optics (CPO) components as Nvidia is adding this technology to its Quantum-X InfiniBand and Spectrum-X Ethernet scale out switches, which are used to lash GPU-accelerated systems together into supercomputing clusters. (This is in contrast to scale up networks, which are used to provide coherent memory across CPUs, GPUs, and XPUs in a server node or a rackscale system using lower latency and higher bandwidth switches and ports.) We think that both Lumentum and Coherent are interesting to Nvidia’s potential future in that both have created optical circuit switches akin to the ones that Google has used for more than a decade as the backbone of its network and, more recently, as the spine in the coherent memory networks for its TPU clusters.

Marvell is interesting for other reasons, which go beyond the obvious ones stated in the announcement by the two companies.

The deal explicitly calls for Marvell to support Nvidia’s licensable NVLink Fusion ports, and to be super precise, says Marvell “will provide custom XPUs and NVLink Fusion-compatible scale-up networking.” That does not necessarily mean that Marvell will support Nvidia’s NVSwitch switches, and the wording sure does sound like Marvell will be able to support the NVLink protocol on the UALink and/or PCI-Express 6.0 switches; the company has just revealed the Structera S 60260, which supports 260 lanes of PCI-Express and probably around 2.1 TB/sec of aggregate bandwidth. This looks like an upgrade of an existing PCI-Express 5.0 product that was created by XConn, which Marvell acquired in January for $540 million. The current NVSwitch 4 and 5 ASICs from Nvidia deliver 1.8 TB/sec of bandwidth per port and have 7.2 TB/sec of aggregate bandwidth. So maybe Marvell is also getting access to NVSwitch chippery to hook into the AI systems that it is helping build.
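As a rough sanity check on that bandwidth estimate, here is a minimal sketch of the arithmetic. It assumes the 2.1 TB/sec figure comes from the raw PCI-Express 6.0 signaling rate of 64 GT/sec per lane, counted in one direction and ignoring FLIT and error correction overhead. That derivation is our assumption, not something the announcement states, and the function name is purely illustrative.

```python
# Back-of-the-envelope PCI-Express bandwidth estimate.
# Assumption: raw PCIe 6.0 signaling at 64 GT/sec per lane, one direction,
# with no allowance for FLIT or error correction overhead.
PCIE6_GT_PER_SEC_PER_LANE = 64


def pcie_raw_bandwidth_tb_per_sec(lanes: int,
                                  gt_per_sec: float = PCIE6_GT_PER_SEC_PER_LANE) -> float:
    """Return raw one-direction bandwidth in TB/sec for a given lane count."""
    gbits_per_sec = lanes * gt_per_sec    # treat 1 GT/sec as 1 Gb/sec of raw signaling
    gbytes_per_sec = gbits_per_sec / 8    # bits to bytes
    return gbytes_per_sec / 1000          # GB/sec to TB/sec (decimal units)


# Lane count and NVSwitch aggregate figure are taken from the text above.
structera_lanes = 260
structera_tb = pcie_raw_bandwidth_tb_per_sec(structera_lanes)
nvswitch_aggregate_tb = 7.2

print(f"Structera S 60260 estimate: {structera_tb:.2f} TB/sec")
print(f"NVSwitch aggregate is {nvswitch_aggregate_tb / structera_tb:.2f}X that estimate")
```

Under those assumptions, 260 lanes works out to about 2.08 TB/sec, which squares with the "around 2.1 TB/sec" estimate and leaves the NVSwitch aggregate figure roughly 3.5X higher. Counting both directions would double the PCI-Express number.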

Considering that Amazon Web Services is the biggest custom AI chip customer that Marvell has, and that AWS already has an NVLink Fusion partnership with Nvidia, and that AWS has said that the future Trainium 4 XPU will support both UALink and NVLink protocols, it stands to reason that the main partner that AWS has for shepherding its Trainium chips from design to packaging – that would be Marvell – would also need access to Nvidia’s technology.

The question that we have is who is licensing the NVLink protocol, and under what conditions are customers allowed to use the protocol in custom AI clusters that might have homegrown CPUs and XPUs? No one has really talked about this in the deals we have seen, but clearly if you are buying NVLink hardware, you probably want rights to NVLink software, too. But maybe these companies just want fat and fast pipes and they plan to figure out the protocols for themselves. It could be both. The hyperscalers and cloud builders are like that. Especially the clouds, which need to have Nvidia and AMD systems to sell capacity because this is what most customers expect to use, but which also want to create their own compute engines (and soon interconnects) to drive down costs for internal facing applications or those they provide as a service.

Under the partnership, Nvidia says that it will be supplying supporting technologies for custom XPUs with NVLink Fusion ports, including Vera CPUs, Groq LPUs, ConnectX NICs, Bluefield DPUs, NVLink interconnects, and Spectrum-X switches.

We wonder if the Nvidia deal will see Big Green take a look at the photonic fabric created by Celestial AI, which Marvell acquired in December 2025 for $3.25 billion. This photonic fabric allows for row-scale coherent memory and has in-network collectives processing just like Nvidia has offered in its past several generations of NVSwitch chips, compliments of the InfiniBand technology that Nvidia got with its $6.9 billion acquisition of Mellanox Technologies way back in April 2020.

It could turn out, as we have said, that under the strategic partnership Marvell (and therefore AWS and any other future XPU or CPU customers) is getting the right to run NVLink protocols atop the Photonic Fabric (which is the technical and trademarked name of the Celestial AI product) that Marvell now owns.

We also wonder how long it will be before there is a partnership between Nvidia and Broadcom, which is a rival to Nvidia in scale out networks but a supplier of all kinds of things, including the VMware ESX Server hypervisor. Earlier this month, I mused about how Broadcom might be the only sizable counterbalance to the hegemony of Nvidia right now, considering its dominant position in Ethernet switch ASICs and its fast-growing custom XPU business.

Broadcom makes Google’s TPUs and now also TPU racks for Anthropic, and is the manufacturer of the MTIA XPUs from Meta Platforms as well. The rumor is that ByteDance and Apple are two other big XPU customers, and there is now a sixth, with OpenAI using Broadcom to shepherd its “Titan” XPU to market.

It is unclear if any of these companies want NVLink Fusion ports on their devices. But if they do, Nvidia and Broadcom will bury the hatchet and cut a deal. That deal will no doubt include cross-coupling of technologies, much as we think is happening in the Marvell deal.

https://www.nextplatform.com/connect/2026/03/31/the-2-billion-nvidia-deal-with-marvell-is-about-a-lot-more-than-nvlink-fusion/5213790