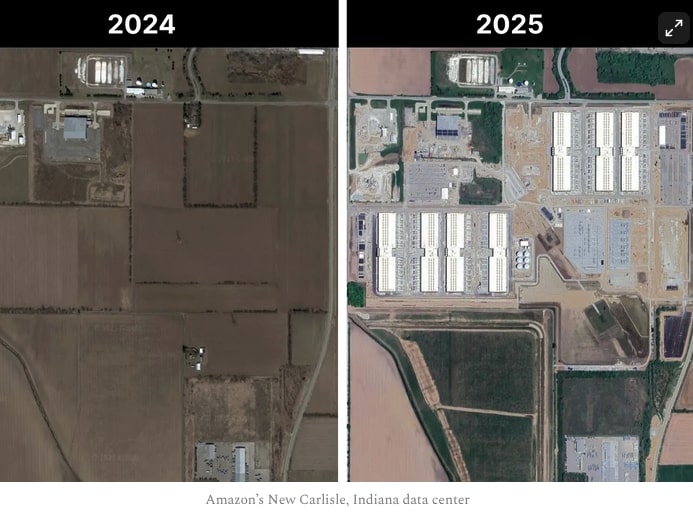

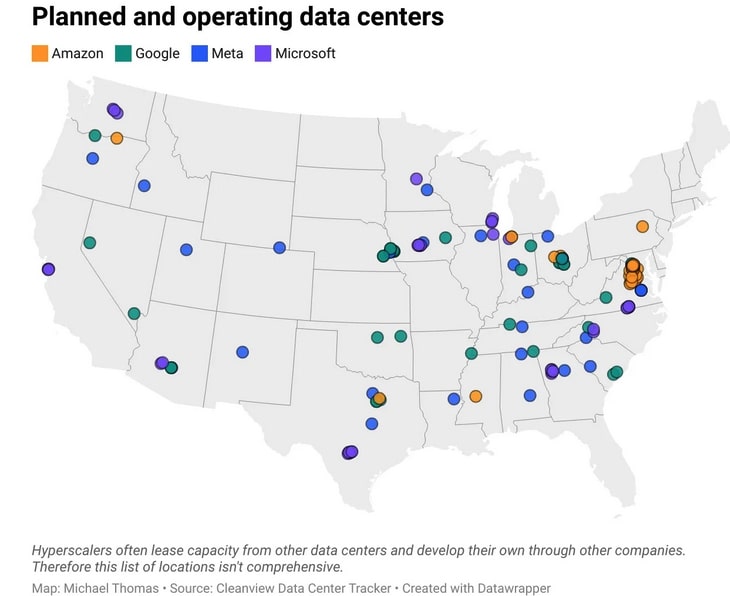

The US and its hyperscalers are spending more money on AI and AI data centers than China. The US hyperscalers have the financial capacity to spend about $400 billion on capital expenditure and hundreds of billions of dollars on research. They also have the free cash flow to expand AI investment toward $1 trillion per year and beyond.

China is now making Nvidia H100-caliber chips domestically, and it is also developing its own HBM memory. These are major achievements, but the US already has Nvidia B200s, which are about 5-10X better than H100s, and Nvidia's Rubin chips are due in the second half of 2026.

Being 100X behind in compute translates to roughly a 30–50% gap in AI performance at the leading edge. This lets China remain a close follower of the US leading edge in AI. China also has most of the world's top AI researchers.

In 2025, China's total AI capital expenditure could reach up to $98 billion. The government is the leading driver of this investment, contributing an estimated $56 billion through various funds. Chinese tech giants are also investing heavily in AI infrastructure: in 2025, internet companies are projected to contribute up to $24 billion, with major firms like Alibaba and Tencent announcing multi-billion dollar investment plans. In 2023 and 2024, more than 500 new data center projects were announced across China, in places such as Inner Mongolia and Guangdong. These amounts still lag behind the US.
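As a rough cross-check of the breakdown above, this sketch treats the quoted figures as billions of US dollars and shows how much of the $98 billion ceiling the two named sources account for. The "other" bucket is simply the remainder: the text does not say where it comes from, and all three numbers are upper-bound estimates rather than reported spending.

```python
# 2025 estimates from the text, in billions of US dollars (upper bounds, not actuals).
total_capex = 98.0
sources = {"government funds": 56.0, "internet companies": 24.0}

attributed = sum(sources.values())
# Remainder is NOT itemized in the source; it is just total minus the named parts.
unattributed = total_capex - attributed

for name, amount in sources.items():
    print(f"{name}: ${amount:.0f}B ({amount / total_capex:.0%} of total)")
print(f"other / not itemized: ${unattributed:.0f}B ({unattributed / total_capex:.0%})")
```

Under these figures, government funds make up a bit over half of the ceiling and internet companies about a quarter, leaving roughly $18 billion unattributed.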

In 2024, U.S. private AI investment grew to $109.1 billion—nearly 12 times China’s $9.3 billion and 24 times the U.K.’s $4.5 billion.
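The multiples in the sentence above can be checked directly from the quoted totals (billions of US dollars). This is a minimal arithmetic sketch; the dictionary and function names are illustrative only.

```python
# 2024 private AI investment, in billions of US dollars, as quoted in the text.
investment = {"US": 109.1, "China": 9.3, "UK": 4.5}

def ratio_to_us(country: str) -> float:
    """How many times larger US private AI investment is than `country`'s."""
    return investment["US"] / investment[country]

for c in ("China", "UK"):
    print(f"US / {c}: {ratio_to_us(c):.1f}x")
```

The ratios come out to about 11.7x and 24.2x, which round to the "nearly 12 times" and "24 times" in the text.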

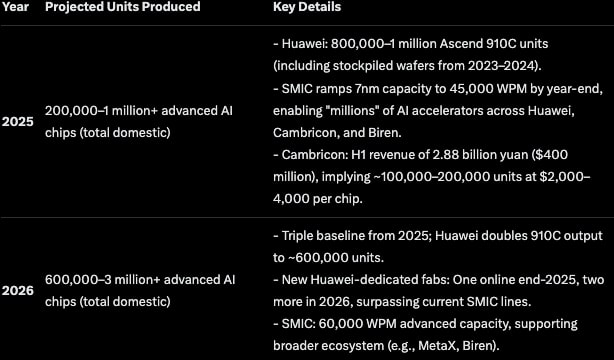

China is spending $50–70 billion a year in subsidies for AI chips and data centers via the Big Fund III. It is focused on 7nm nodes for AI training and inference. Domestic chips are expected to power ~30–40% of China's AI compute by 2026, up from less than 10% in 2024. Chinese semiconductor yields are ~70–80%, versus 90%+ for TSMC, which inflates costs by 20–50%. Without EUV tools, China has no access to 5nm or more advanced nodes.
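To see how much of the quoted 20–50% cost penalty yield alone could explain, this sketch assumes a deliberately simplified model: both fabs pay the same price per wafer and fit the same number of dies on it, so cost per good die scales with 1 / yield. The function name and the 90% reference yield are illustrative assumptions, not figures from any specific fab.

```python
def cost_premium(yield_domestic: float, yield_reference: float = 0.90) -> float:
    """Extra cost per good die, as a fraction, caused by lower yield alone.

    Simplified model (an assumption): identical wafer cost and dies per wafer,
    so cost per good die is proportional to 1 / yield.
    """
    return yield_reference / yield_domestic - 1.0

for y in (0.70, 0.80):
    print(f"yield {y:.0%}: +{cost_premium(y):.1%} per good die")
```

Under these assumptions, yields of 70–80% against 90% add roughly 12–29% per good die; the rest of the quoted range would have to come from factors this model leaves out.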

China's fab construction and scaling are lagging. Chinese fabs are racing toward advanced AI-relevant nodes (5–7nm) and are about 3–5 years behind leaders like TSMC. Volume expansion is on track, however: 18 new fabs are starting worldwide in 2025, about 40% of them in China.

Cambricon Technologies reported H1 2025 revenue of 2.88 billion yuan, a 4,347% year-over-year increase. Customers that depended on Nvidia suddenly needed alternatives when US export restrictions limited their access to Nvidia chips, and they found that Cambricon's Siyuan 370 chips offered acceptable performance for specific workloads at lower cost.
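The growth figure also implies how small Cambricon's revenue was a year earlier. A 4,347% increase means the new value is 44.47 times the old one, so the prior value can be backed out by division. This is a sketch of that arithmetic only; the helper name is hypothetical.

```python
def prior_value(current: float, pct_increase: float) -> float:
    """Back out the earlier value from a current value and a % increase.

    new = old * (1 + pct / 100)  =>  old = new / (1 + pct / 100)
    """
    return current / (1 + pct_increase / 100)

h1_2025_revenue_yuan = 2.88e9
h1_2024 = prior_value(h1_2025_revenue_yuan, 4347)
print(f"Implied H1 2024 revenue: {h1_2024 / 1e6:.1f} million yuan")
```

This gives roughly 65 million yuan for H1 2024, which is why the percentage increase is so large.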

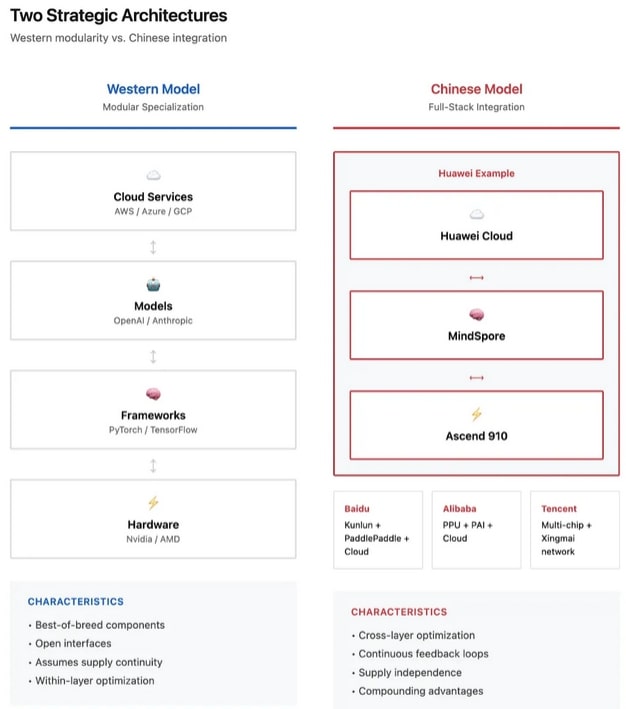

Baidu deployed a 30,000-card Kunlun P800 cluster, making it the first Chinese company to operate domestic AI accelerators at hyperscale for LLM training. More telling, Baidu's Intelligent Cloud won a 1 billion yuan China Mobile contract for AI inference servers, with Kunlun chips capturing all three sub-packages in the CUDA-like ecosystem category.

In September 2025, Alibaba's new training chip, codenamed PPU, was spotted. It uses a domestic 7nm process and 2.5D chiplet packaging, and its specifications reportedly match the H100 at ~40% lower cost. China's tech giants have committed 380 billion yuan to AI infrastructure, betting on domestic chips as long-term foundations rather than stopgaps.

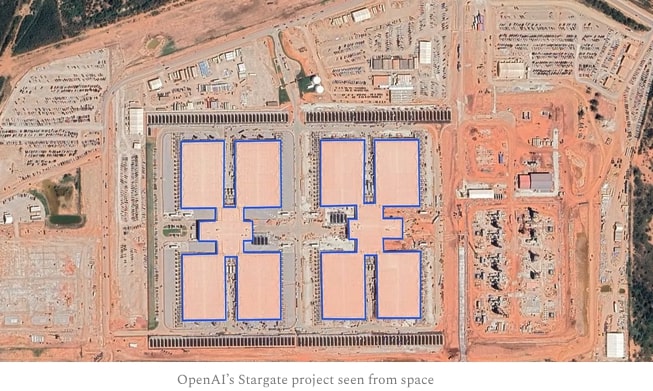

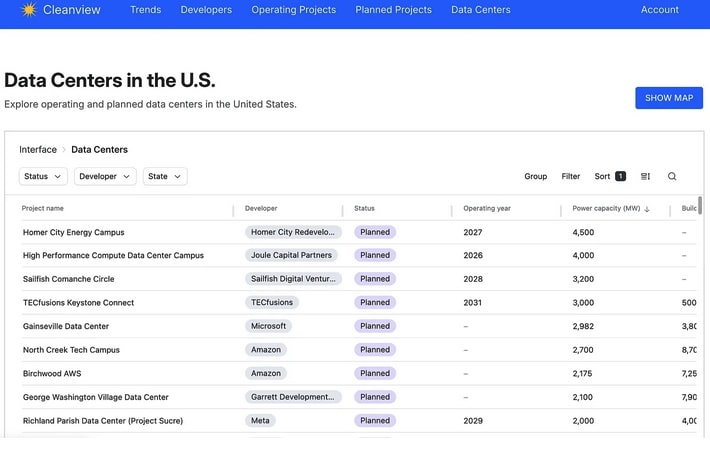

Cleanview has a new data center tracker. They are tracking about 550 planned data centers with a combined power capacity of 125 GW.
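Dividing the tracker's combined capacity by the number of planned sites gives a rough sense of scale per project. This is a simple average, assuming both figures cover the same set of projects; real sites will vary widely around it.

```python
planned_sites = 550            # approximate count from the tracker, per the text
combined_capacity_gw = 125.0   # combined planned power capacity, per the text

# Convert GW to MW, then spread evenly across sites (a plain mean, not a median).
avg_mw = combined_capacity_gw * 1000 / planned_sites
print(f"Average planned capacity: {avg_mw:.0f} MW per data center")
```

The average works out to roughly 227 MW per planned data center.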