Merges 8th-Gen TPUs, NVIDIA Rubin, & Axion CPUs Together

Google has announced the AI Hypercomputer, which brings together TPUv8 series, NVIDIA Rubin, & Axion CPUs to power the Agentic AI era.

Google Cloud Next 26: AI Hypercomputer Announcement Gives Agentic AI The Next Push, Leverages In-House TPUs, CPUs & Scales Beyond With NVIDIA Rubin

Gone are the days of supercomputers; the Agentic AI era will be all about hypercomputers, which will combine various compute options to deliver customers the most flexible and performant AI architecture ever built.

A diagram titled ‘AI Hypercomputer’ features three sections: ‘Flexible Consumption’ with ‘Orchestration,’ ‘Cluster Management,’ and ‘Consumption Models’; ‘Open Software’ with ‘Frameworks’ and ‘Inference Engines’; and ‘Purpose-built Hardware’ with ‘Compute,’ ‘Storage,’ and ‘Networking.’

Today, at Google’s Cloud Next 26 event, the company formally announced its AI Hypercomputer. The new high-performance computing datacenter for Agentic AI houses an advanced, purpose-built architecture that unifies performance-optimized hardware for compute, storage, and networking with open software and ML frameworks.

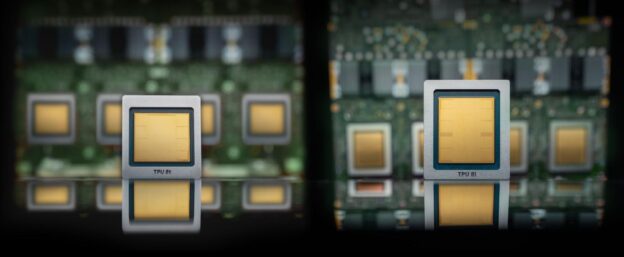

To make Google’s AI Hypercomputer possible, the company had to go above and beyond. It will house its latest custom TPUv8 series, Axion Cloud CPUs, and will also deploy NVIDIA Rubin GPUs. Today’s announcement also comes with the launch of Google’s 8th Gen TPU lineup, which comes in two flavors: the TPU 8t and the TPU 8i.

A close-up of a circuit board showing multiple ‘TPU v4’ chips with cooling components attached.

Google TPU 8t – Training Chip

The Google TPU 8t chip is designed as a training powerhouse, reducing the deployment of frontier models from months to weeks. The chip offers the highest possible compute throughput, shared memory, and interchip bandwidth in the most power-efficient package ever built. The TPU 8t chip has a total FP4 compute capacity of 121 Exaflops per pod, 2.84x higher than Ironwood.

A diagram labeled ‘TensorCore’ illustrating a hardware architecture with components such as ‘Host,’ ‘PCIe Gen5 x16,’ ‘Sparse core,’ ‘MXU,’ ‘VPU + Vmem,’ ‘HBM3E Ctrl,’ and ‘ICI,’ with color-coded keys for compute, memory, interconnect, and management features.

The key features include:

Massive scale: A single TPU 8t superpod now scales to 9,600 chips and two petabytes of shared high-bandwidth memory, with double the interchip bandwidth of the previous generation. This architecture delivers 121 ExaFlops of compute and allows the most complex models to leverage a single, massive pool of memory.

Maximum utilization: By also integrating 10x faster storage access, combined with TPUDirect to pull data directly into the TPU, TPU 8t helps ensure maximum utilization of the end-to-end system.

Near-linear scaling: Our new Virgo Network, combined with JAX and our Pathways software, means TPU 8t can provide near-linear scaling for up to a million chips in a single logical cluster.

Native FP4: TPU 8t introduces native 4-bit floating point (FP4) to overcome memory bandwidth bottlenecks, doubling MXU throughput while maintaining accuracy for large models even at lower-precision quantization. By reducing the bits per parameter, the platform minimizes energy-intensive data movement and allows larger model layers to fit within local hardware buffers for peak compute utilization.
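The effect of fewer bits per parameter on memory footprint can be illustrated with simple arithmetic. The sketch below is only a rough estimate: the 400-billion-parameter model size is a hypothetical value chosen for illustration, the 216 GB figure is the per-chip HBM capacity quoted in this article, and the calculation ignores quantization scale factors, activations, and optimizer state.

```python
# Bits per stored value for each numeric format (format widths, not TPU measurements).
BITS_PER_PARAM = {"bf16": 16, "fp8": 8, "fp4": 4}

HBM_GB_TPU_8T = 216     # per-chip HBM capacity quoted in this article
EXAMPLE_PARAMS = 400e9  # hypothetical model size, chosen only for illustration


def weight_footprint_gb(num_params: float, precision: str) -> float:
    """Raw weight storage in GB (1 GB = 10**9 bytes), ignoring scale factors and padding."""
    return num_params * BITS_PER_PARAM[precision] / 8 / 1e9


def max_params_in_hbm(hbm_gb: float, precision: str) -> float:
    """Largest parameter count whose raw weights fit in hbm_gb of memory."""
    return hbm_gb * 1e9 * 8 / BITS_PER_PARAM[precision]


if __name__ == "__main__":
    for precision in BITS_PER_PARAM:
        size = weight_footprint_gb(EXAMPLE_PARAMS, precision)
        limit = max_params_in_hbm(HBM_GB_TPU_8T, precision)
        print(f"{precision}: {size:.0f} GB of weights, "
              f"up to {limit / 1e9:.0f}B parameters per {HBM_GB_TPU_8T} GB chip")
```

Under these assumptions, each halving of precision halves the bytes that must move between memory and compute units, which is the effect the FP4 description above refers to.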

Google TPU 8i – Inferencing Chip

The second chip, TPU 8i, is designed for inference and pairs 288 GB of HBM memory with 384 MB of on-chip SRAM, the latter being 3x the SRAM capacity of the previous generation. With such a large SRAM, you can keep models active entirely on the chip. The TPU 8i chip has a total FP8 compute capacity of 331.8 Exaflops per pod, 6.74x higher than Ironwood.
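To get a feel for what 384 MB of on-chip SRAM can hold, consider the KV cache of an inference session. The sketch below uses the standard per-token KV cache size formula with a hypothetical decoder configuration (32 layers, 8 KV heads, head dimension 128, 1 byte per value). None of those model parameters come from the article, the 384 MB figure is read as MiB, and the calculation assumes the whole SRAM is available for the cache, which is unlikely in practice.

```python
SRAM_BYTES_TPU_8I = 384 * 2**20  # 384 MB from this article, read here as MiB (assumption)

# Hypothetical decoder configuration, not taken from the article.
NUM_LAYERS = 32
NUM_KV_HEADS = 8
HEAD_DIM = 128
BYTES_PER_VALUE = 1  # e.g. an 8-bit cache format


def kv_bytes_per_token(layers: int, kv_heads: int, head_dim: int, bytes_per_value: int) -> int:
    """Bytes of KV cache added per generated or prefilled token."""
    # Factor 2 covers one key vector and one value vector per head per layer.
    return 2 * layers * kv_heads * head_dim * bytes_per_value


def max_cached_tokens(sram_bytes: int, per_token_bytes: int) -> int:
    """Whole tokens whose KV entries fit in the given SRAM budget."""
    return sram_bytes // per_token_bytes


if __name__ == "__main__":
    per_token = kv_bytes_per_token(NUM_LAYERS, NUM_KV_HEADS, HEAD_DIM, BYTES_PER_VALUE)
    tokens = max_cached_tokens(SRAM_BYTES_TPU_8I, per_token)
    print(f"{per_token} bytes per token, {tokens} tokens fit in SRAM")
```

With this hypothetical configuration, each token costs 64 KiB of cache, so roughly six thousand tokens of context would fit on-chip per TPU before spilling to HBM.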

A detailed architecture diagram shows a system with two TensorCores, each with VPU+Vmem, interfaced with HBM3 controllers, PCIe Gen5 x16, Gen2 x1, and multiple memory/DMA interconnects, along with an ICI router and 6x200G SerDes octals.

The salient features of TPU 8i include:

Axion-powered efficiency: We doubled the physical CPU hosts per server, moving to our custom Axion Arm-based CPUs. By using a non-uniform memory architecture (NUMA) for isolation, we have optimized the full system for superior performance.

Scaling MoE models: For modern Mixture of Experts (MoE) models, we doubled the Interconnect (ICI) bandwidth to 19.2 Tb/s. Our new Boardfly architecture reduces the maximum network diameter by more than 50%, ensuring the system works as one cohesive, low-latency unit.

Eliminating lag: Our new on-chip Collectives Acceleration Engine (CAE) offloads global operations, reducing on-chip latency by up to 5x, minimizing lag.

In generation-over-generation terms, the TPU 8t training chip delivers 2.7x better performance per dollar than Ironwood (TPUv7) in large-scale training, while the TPU 8i inference chip delivers an 80% performance-per-dollar improvement over Ironwood at low-latency targets for MoE models. Both chips also deliver twice the performance per watt, which is vital for AI TCO.
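These ratios translate into cost and energy for a fixed amount of work by taking the reciprocal. The following is a minimal sketch of that arithmetic, assuming the quoted gains apply uniformly to the workload; the function and constant names are illustrative only.

```python
def relative_cost(perf_per_unit_ratio: float) -> float:
    """Cost (or energy) for the same work, relative to the older chip.

    A chip with k times the performance per dollar needs 1/k of the
    dollars to finish an identical job.
    """
    if perf_per_unit_ratio <= 0:
        raise ValueError("ratio must be positive")
    return 1.0 / perf_per_unit_ratio


TPU_8T_PERF_PER_DOLLAR = 2.7         # "2.7x better" from the article
TPU_8I_PERF_PER_DOLLAR = 1.0 + 0.80  # "80% improvement" expressed as a ratio
PERF_PER_WATT = 2.0                  # "twice the performance per watt"

if __name__ == "__main__":
    print(f"TPU 8t training cost vs Ironwood:  {relative_cost(TPU_8T_PERF_PER_DOLLAR):.0%}")
    print(f"TPU 8i inference cost vs Ironwood: {relative_cost(TPU_8I_PERF_PER_DOLLAR):.0%}")
    print(f"Energy for the same work:          {relative_cost(PERF_PER_WATT):.0%}")
```

In other words, the quoted figures would correspond to roughly 37% of Ironwood's cost for the same training job, about 56% for the same low-latency inference work, and half the energy.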

A high-performance liquid cooling system with labeled pipes and visible gauges in a data center.

Both chips support Google’s 4th Gen liquid cooling technology, which sustains compute and performance densities that are not possible with air cooling.

| Feature | TPU 8t | TPU 8i |
| --- | --- | --- |
| Primary Workload | Large-scale pre-training | Sampling, serving, and reasoning |
| Network Topology | 3D torus | Boardfly |
| Specialized Chip Features | SparseCore (Embeddings) & LLM Decoder Engine | CAE (Collectives Acceleration Engine) |
| HBM Capacity | 216 GB | 288 GB |
| On-Chip SRAM (Vmem) | 128 MB | 384 MB |
| Peak FP4 PFLOPs | 12.6 | 10.1 |
| HBM Bandwidth | 6,528 GB/s | 8,601 GB/s (~1.3x of TPU 8t) |
| CPU Header | Arm Axion | Arm Axion |
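The per-chip figures in the table can be cross-checked against the TPU 8t superpod figures quoted earlier (9,600 chips, 121 ExaFlops, two petabytes of HBM). The sketch below simply multiplies per-chip values by the chip count, assuming peak numbers add linearly across the pod and that 1 PB equals 10^6 GB.

```python
TPU_8T_CHIPS_PER_SUPERPOD = 9_600  # superpod size quoted in this article
TPU_8T_FP4_PFLOPS = 12.6           # peak FP4 per chip, from the table
TPU_8T_HBM_GB = 216                # HBM per chip, from the table
TPU_8T_HBM_GBPS = 6_528            # HBM bandwidth per chip, from the table
TPU_8I_HBM_GBPS = 8_601


def pod_exaflops(chips: int, pflops_per_chip: float) -> float:
    """Aggregate peak compute in ExaFLOPs (1 EF = 1,000 PF)."""
    return chips * pflops_per_chip / 1_000


def pod_hbm_pb(chips: int, hbm_gb_per_chip: float) -> float:
    """Aggregate HBM in petabytes (1 PB = 10**6 GB)."""
    return chips * hbm_gb_per_chip / 1_000_000


if __name__ == "__main__":
    print(f"Pod FP4 compute: {pod_exaflops(TPU_8T_CHIPS_PER_SUPERPOD, TPU_8T_FP4_PFLOPS):.2f} EF")
    print(f"Pod HBM:         {pod_hbm_pb(TPU_8T_CHIPS_PER_SUPERPOD, TPU_8T_HBM_GB):.2f} PB")
    print(f"8i/8t HBM bandwidth ratio: {TPU_8I_HBM_GBPS / TPU_8T_HBM_GBPS:.2f}x")
```

The products come out at about 121 ExaFlops and about 2.07 PB, which agrees with the superpod figures above, and the bandwidth ratio rounds to the ~1.3x noted in the table.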

Two chips labeled ‘TPU 8i’ and ‘TPU 8t’ are displayed against a blue background.

And with that, let’s round up the main highlights of the Google AI Hypercomputer, which are listed below:

TPU 8t, optimized for training, uses breakthrough Inter-Chip Interconnect (ICI) technology to scale up to 9,600 TPUs and 2 PB of shared, high-bandwidth memory in a single superpod. It achieves 3x the processing power of Ironwood and delivers up to 2x more performance/Watt.

TPU 8i, optimized for inference, uses our new Boardfly topology to directly connect 1,152 TPUs in a single pod. It features 3x more on-chip SRAM compared to previous versions to host larger KV caches entirely on-silicon and integrates a specialized Collectives Acceleration Engine. Taken together, TPU 8i delivers 80% better performance per dollar for inference than the prior generation.

NVIDIA GPUs are a core part of our AI accelerator portfolio. We will be among the first to offer NVIDIA Vera Rubin NVL72, in addition to the Blackwell- and Hopper-based instances already available.

Google Cloud Axion includes our N4A Axion instances—launched in January—which deliver 100% better price-performance than comparable x86 instances, ensuring sustained, efficient operation for agents.

Network-optimized Compute is expanding with new C4N and M4N machine series, perfectly optimized for high-volume agent communication, network-heavy Telco 5G core, and enterprise databases. C4N instances can deliver an almost 4x increase in network bandwidth per vCPU over standard C4 instances.

Innovations in Storage include improvements in Managed Lustre, which now delivers 10 TB per second of throughput to A5X or TPU 8t over RDMA, supercharging training. In Rapid Storage, we’ve made significant performance improvements—going from 6 TB/sec to 15 TB/sec—to speed up AI training and inference workloads. And we are introducing Smart Storage, which applies semantic meaning to unstructured data, providing the foundation for our Enterprise Knowledge Graph.

New capabilities in Networking include the Virgo Network, our new, purpose-built, AI-optimized network that connects either NVIDIA Vera Rubin NVL72 systems or TPU 8t superpods into massive supercomputers with hundreds of thousands of accelerators, supercharging distributed training of the world’s most capable frontier models.

Google Cloud will also be one of the first AI infrastructure providers to offer NVIDIA VR200 (Vera Rubin) accelerators. The Rubin GPUs will be paired with Google’s brand new Virgo network, offering massive-scale training clusters alongside Google’s own 8th Gen TPU family.

The Google AI Hypercomputer will be used by several customers, including big names such as the US DOE, Boston Dynamics, Citadel Securities, Thinking Machines Lab, and Axia Energy.

https://wccftech.com/google-unveils-the-heart-of-agentic-ai-the-ai-hypercomputer-8th-gen-tpus-nvidia-rubin-axion-cpus/amp/