SAN JOSE, CALIF. The lights dimmed and 17,562 people drew breath. A sea of smartphones held above heads lit up across the home of San Jose’s ice hockey team, the Sharks.

A real-time, AI-generated digital sculpture began to fill the towering display behind the stage—a constantly swirling corpus of color-changing bubbles, suggesting barely perceptible landscapes, animals and natural forms, like a Rorschach test in vibrant technicolor.

Then the pre-game fanfare, which, as usual, began with promotional footage of how Nvidia is changing the world. Combined with the atmosphere in the stadium, clips of cutting-edge healthcare and climate change research on the big screen became surprisingly emotive.

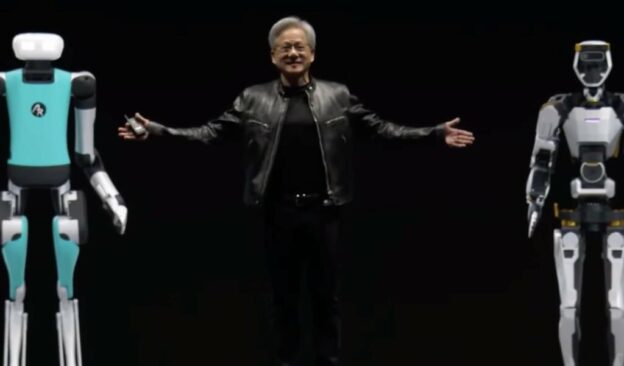

Then he took the stage, trademark alligator-embossed black leather motorcycle jacket contrasting with white hair, to a barrage of smartphone flashes.

“I hope you realize this isn’t a rock concert—this is a developer conference,” he said, to a roar of laughter and applause from the crowd.

Welcome to Nvidia CEO Jensen Huang’s GTC Keynote, also known as one of the biggest AI hype vehicles in the industry.

Over two hours, Huang laid out numerous innovations and breakthroughs Nvidia made or enabled in the last year. He talked about how generative AI has infiltrated practically every industry—including chip design—but emphasized the months required to train the biggest trillion-parameter models.

“What we need is bigger GPUs,” he said.

No kidding.

Then came the moment the stadium, and the world, had been waiting for, as Huang unveiled Nvidia’s new GPU platform: Blackwell. The centerpiece, B200, is Nvidia’s first GPU superchip: two reticle-sized dies, built on a new custom process node, that together can offer 2.5× the FLOPS of the current state-of-the-art AI training chip, H100. The two dies are joined by a 10 TB/s link and are fully cache-coherent, so they behave like one big GPU. The result is as close as it is possible to get to breaking the reticle limit today.

While Blackwell is no doubt a triumph of engineering, even more significant gains have been realized at the system level. For generative AI, where communication is the bottleneck, combining Nvidia’s switch chips, NICs, and DPUs with 72 Blackwell GPUs and 36 Grace CPUs in a single rack can improve performance by 30× and power-performance by 25× versus Hopper.

“This system is kind of insane,” Huang said.

Insane seems about right—a single rack provides 1.4 exaFLOPS of AI compute (FP4/sparse), 13.5 TB of HBM and 30 TB of fast memory. Liquid cooling ejects 2 liters per second of hot water, which Huang suggested could “power a Jacuzzi.” In fact, this machine will likely power almost all of the world’s generative AI research, training, and deployments going forward, and a good portion of scientific computing, too.
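For a sense of scale, the rack-level figures above can be broken down per GPU with simple division. The sketch below is back-of-envelope arithmetic, not an Nvidia specification: it assumes the quoted compute and HBM totals are spread evenly across the rack's 72 Blackwell GPUs and that the units are decimal (1 exaFLOPS = 1,000 petaFLOPS, 1 TB = 1,000 GB). The constant and function names are hypothetical, chosen only for illustration.

```python
# Rack-level figures as quoted in the article.
RACK_AI_EXAFLOPS = 1.4   # AI compute, FP4 with sparsity
RACK_HBM_TB = 13.5       # total HBM in the rack
GPUS_PER_RACK = 72       # Blackwell GPUs per rack


def per_gpu(rack_total: float, gpus: int = GPUS_PER_RACK) -> float:
    """Split a rack-level total evenly across GPUs (an assumption)."""
    return rack_total / gpus


# Convert to smaller decimal units before dividing.
pflops_per_gpu = per_gpu(RACK_AI_EXAFLOPS * 1000)  # exaFLOPS -> petaFLOPS
hbm_gb_per_gpu = per_gpu(RACK_HBM_TB * 1000)       # TB -> GB

print(f"Implied AI compute per GPU: {pflops_per_gpu:.1f} PFLOPS (FP4/sparse)")
print(f"Implied HBM per GPU: {hbm_gb_per_gpu:.1f} GB")
```

Under those assumptions, the quoted totals work out to roughly 19 petaFLOPS of FP4/sparse compute and a little under 190 GB of HBM per GPU.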

Huang highlighted dozens of other Nvidia innovations over the two hours, including AI software, Omniverse and robotics, with no less zeal, even saying that the “ChatGPT moment for robotics is around the corner,” but the real focus, as always, was on generative AI.

Demand for training and inference of generative AI is exploding and Nvidia holds the lead practically unchallenged in terms of hardware deployments today. With the launch of Blackwell, every competitor product that was claiming to keep pace with Hopper has been blown out of the water. B200, as the new H100, has reset the standard by which all other AI hardware is measured, and that standard just jumped 30× at the system level.

In other words: the gloves are off.

https://www.eetimes.com/huang-keynote-the-gloves-are-off/