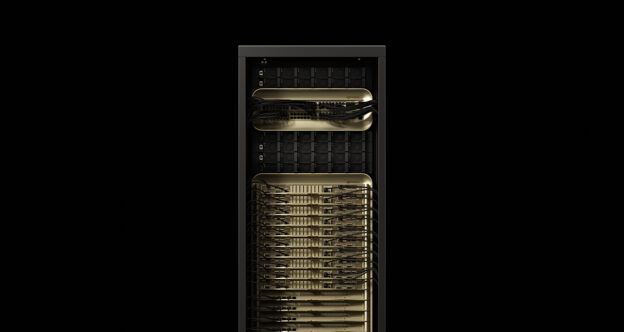

NVIDIA GB200 NVL72 system boosts AI factory profitability with outstanding throughput even as models get larger and more complex.

In the latest MLPerf Inference v5.0 benchmarks, which reflect some of the most challenging inference scenarios, the NVIDIA Blackwell platform set records. The round also marked NVIDIA’s first MLPerf submission using the NVIDIA GB200 NVL72 system, a rack-scale solution designed for AI reasoning.

Delivering on the promise of cutting-edge AI takes a new kind of compute infrastructure, called AI factories. Unlike traditional data centers, AI factories do more than store and process data — they manufacture intelligence at scale by transforming raw data into real-time insights. The goal for AI factories is simple: deliver accurate answers to queries quickly, at the lowest cost and to as many users as possible.

The complexity of pulling this off is significant and takes place behind the scenes. As AI models grow to billions and trillions of parameters to deliver smarter replies, the compute required to generate each token increases. This requirement reduces the number of tokens that an AI factory can generate and increases cost per token. Keeping inference throughput high and cost per token low requires rapid innovation across every layer of the technology stack, spanning silicon, network systems and software.
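The relationship between throughput and cost per token can be sketched in a few lines of Python. This is a minimal illustration, not a pricing model: the function name, the hourly cost and the throughput figures are all hypothetical and do not come from the MLPerf results.

```python
def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Cost of generating one million tokens at a sustained throughput."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


# Hypothetical figures, chosen only to show the relationship.
HOURLY_COST_USD = 100.0

# Baseline model on a fixed amount of hardware.
baseline = cost_per_million_tokens(HOURLY_COST_USD, tokens_per_second=10_000)

# A larger model that needs twice the compute per token halves throughput
# on the same hardware, so cost per token doubles.
larger_model = cost_per_million_tokens(HOURLY_COST_USD, tokens_per_second=5_000)

print(f"baseline:     ${baseline:.2f} per million tokens")
print(f"larger model: ${larger_model:.2f} per million tokens")
```

With infrastructure cost held constant, cost per token moves inversely with throughput, which is why throughput gains translate directly into lower cost per token.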

The latest updates to MLPerf Inference, a peer-reviewed industry benchmark of inference performance, include the addition of Llama 3.1 405B, one of the largest and most challenging-to-run open-weight models. The new Llama 2 70B Interactive benchmark features much stricter latency requirements compared with the original Llama 2 70B benchmark, better reflecting the constraints of production deployments in delivering the best possible user experiences.

In addition to the Blackwell platform, the NVIDIA Hopper platform performed strongly across the board, with Llama 2 70B throughput rising by up to 1.6x over the last year thanks to full-stack optimizations.

NVIDIA Blackwell Sets New Records

The GB200 NVL72 system — connecting 72 NVIDIA Blackwell GPUs to act as a single, massive GPU — delivered up to 30x higher throughput on the Llama 3.1 405B benchmark over the NVIDIA H200 NVL8 submission this round. This feat was achieved through more than triple the performance per GPU and a 9x larger NVIDIA NVLink interconnect domain.
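The two factors above can be combined with simple arithmetic. The sketch below assumes that system throughput is the product of per-GPU throughput and the number of GPUs in the NVLink domain, which is a simplification for illustration and not a statement of how the benchmark results were derived.

```python
NVL72_GPUS = 72  # GPUs in one GB200 NVL72 NVLink domain
NVL8_GPUS = 8    # GPUs in one H200 NVL8 NVLink domain

# Ratio of NVLink domain sizes: 72 / 8 = 9.
domain_ratio = NVL72_GPUS / NVL8_GPUS


def system_speedup(per_gpu_speedup: float, domain_ratio: float) -> float:
    """System-level speedup, assuming it is the product of the two factors."""
    return per_gpu_speedup * domain_ratio


def implied_per_gpu_speedup(total_speedup: float, domain_ratio: float) -> float:
    """Per-GPU speedup implied by a total speedup, under the same assumption."""
    return total_speedup / domain_ratio


# Exactly 3x per GPU would give 27x at the system level.
exactly_triple = system_speedup(3.0, domain_ratio)

# A 30x system-level gain implies roughly 3.3x per GPU, i.e. "more than triple".
implied = implied_per_gpu_speedup(30.0, domain_ratio)

print(f"domain ratio: {domain_ratio:.0f}x")
print(f"3x per GPU -> {exactly_triple:.0f}x system")
print(f"30x system -> {implied:.2f}x per GPU")
```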

While many companies run MLPerf benchmarks on their hardware to gauge performance, only NVIDIA and its partners submitted and published results on the Llama 3.1 405B benchmark.

Production inference deployments often have latency constraints on two key metrics. The first is time to first token (TTFT), or how long it takes for a user to begin seeing a response to a query given to a large language model. The second is time per output token (TPOT), or how quickly tokens are delivered to the user.
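As a rough sketch, both metrics can be computed from the arrival timestamps of a streamed response. The names below are hypothetical, and TPOT is taken here as the average gap between tokens after the first, which is one common convention; the exact MLPerf measurement rules may differ.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class LatencyMetrics:
    ttft_s: float  # time to first token, in seconds
    tpot_s: float  # average time per output token after the first, in seconds


def latency_metrics(request_time_s: float, token_times_s: Sequence[float]) -> LatencyMetrics:
    """Compute TTFT and TPOT from a request time and per-token arrival times."""
    if not token_times_s:
        raise ValueError("at least one output token is required")
    ttft = token_times_s[0] - request_time_s
    n = len(token_times_s)
    # With a single token there are no inter-token gaps to average.
    tpot = 0.0 if n == 1 else (token_times_s[-1] - token_times_s[0]) / (n - 1)
    return LatencyMetrics(ttft_s=ttft, tpot_s=tpot)


def meets_constraints(m: LatencyMetrics, max_ttft_s: float, max_tpot_s: float) -> bool:
    """Check a response against latency limits (limits are caller-supplied)."""
    return m.ttft_s <= max_ttft_s and m.tpot_s <= max_tpot_s


# Hypothetical response: request sent at t=0, four tokens streamed back.
metrics = latency_metrics(0.0, [0.5, 0.6, 0.7, 0.8])
print(metrics)
print(meets_constraints(metrics, max_ttft_s=1.0, max_tpot_s=0.2))
```

Tightening either limit, as an interactive benchmark does, shrinks the set of responses that count as valid, so a system must sustain its throughput while answering each user faster.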

The new Llama 2 70B Interactive benchmark has a 5x shorter TPOT limit and a 4.4x lower TTFT limit than the original Llama 2 70B benchmark, modeling a more responsive user experience. On this test, NVIDIA’s submission using an NVIDIA DGX B200 system with eight Blackwell GPUs tripled performance over using eight NVIDIA H200 GPUs, setting a high bar for this more challenging version of the Llama 2 70B benchmark.

Combining the Blackwell architecture and its optimized software stack delivers new levels of inference performance, paving the way for AI factories to deliver higher intelligence, increased throughput and faster token rates.

NVIDIA Hopper AI Factory Value Continues Increasing

The NVIDIA Hopper architecture, introduced in 2022, powers many of today’s AI inference factories, and continues to power model training. Through ongoing software optimization, NVIDIA increases the throughput of Hopper-based AI factories, leading to greater value.

On the Llama 2 70B benchmark, first introduced a year ago in MLPerf Inference v4.0, H100 GPU throughput has increased by 1.5x. The H200 GPU, based on the same Hopper GPU architecture with larger and faster GPU memory, extends that increase to 1.6x.

Hopper also ran every benchmark, including the newly added Llama 3.1 405B, Llama 2 70B Interactive and graph neural network tests. This versatility means Hopper can run a wide range of workloads and keep pace as models and usage scenarios grow more challenging.

It Takes an Ecosystem

This MLPerf round, 15 partners submitted stellar results on the NVIDIA platform, including ASUS, Cisco, CoreWeave, Dell Technologies, Fujitsu, Giga Computing, Google Cloud, Hewlett Packard Enterprise, Lambda, Lenovo, Oracle Cloud Infrastructure, Quanta Cloud Technology, Supermicro, Sustainable Metal Cloud and VMware.

The breadth of submissions reflects the reach of the NVIDIA platform, which is available across all cloud service providers and server makers worldwide.

MLCommons’ work to continuously evolve the MLPerf Inference benchmark suite to keep pace with the latest AI developments and provide the ecosystem with rigorous, peer-reviewed performance data is vital to helping IT decision makers select optimal AI infrastructure.

Learn more about MLPerf.

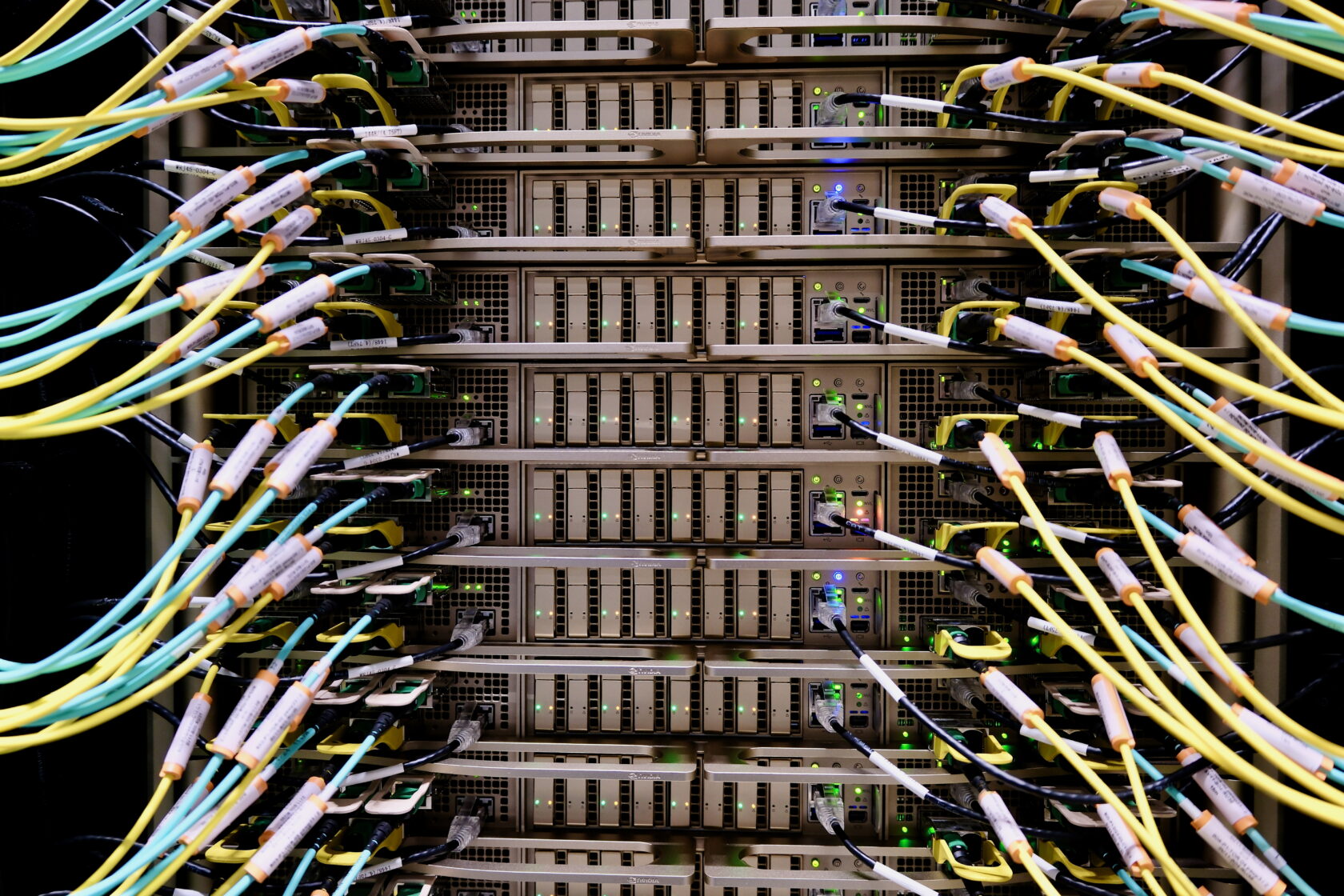

Images and video taken at an Equinix data center in Silicon Valley.

https://blogs.nvidia.com/blog/blackwell-mlperf-inference/?ncid=so-face-307256&linkId=100000354282489