Stretching Multi-Site Clusters to Nearly 1 Million GPUs

Google and NVIDIA have teamed up to give users access to nearly one million NVIDIA GPUs through the newly launched A5X instances. The announcement is part of the pair’s latest collaboration to reduce inference costs and improve token throughput. The A5X system relies on NVIDIA’s networking accelerators, which enable single-cluster and multi-cluster computing infrastructure for AI workloads.

The A5X Instance: Purpose-Built for Agentic AI Workloads

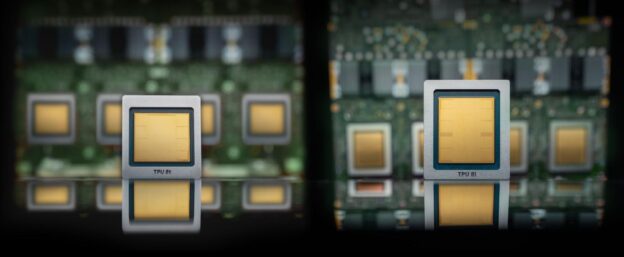

The A5X instances are Google’s latest products designed specifically to run agentic artificial intelligence workloads. They are part of Google’s AI Hypercomputer portfolio, which also powers the firm’s Gemini platform and its consumer and enterprise AI offerings. Alongside the A5X instances, Google announced a slew of AI Hypercomputer upgrades, including new virtual machines powered by custom Arm-based CPUs, eighth-generation tensor processing units, and native PyTorch support for TPUs.

These capabilities target agentic AI workloads, in which a group of AI agents breaks a problem or task into pieces and works through them piece by piece. The A5X instances are Google’s first instances built around NVIDIA’s latest Vera Rubin AI GPUs.

Google Virgo & ConnectX-9: Scaling to Nearly a Million Vera Rubin GPUs

According to the announcement, the A5X will use NVIDIA’s ConnectX-9 NICs (network interface cards), which are designed to accelerate AI workloads in Ethernet-based cloud infrastructure. Together with Google’s Virgo platform, the NICs will let users access up to 80,000 Rubin GPUs in a single cluster and up to 960,000 GPUs in a multi-site cluster.

| Accelerator | Interconnect | Max Single-Data-Center Cluster | Max Multi-Site Cluster |
|---|---|---|---|
| NVIDIA Vera Rubin GPUs (A5X) | NVIDIA ConnectX-9 NICs + Google Virgo | 80,000 | 960,000 |
| Google TPUs | Google Virgo | 134,000 | 1,000,000+ |

The ROI: 10x Lower Inference Costs & Higher Throughput

Google’s Virgo platform lets the company connect large numbers of AI chips, both within a single data center and across multiple sites. In addition to NVIDIA’s Rubin GPUs, it supports Google’s tensor processing units (TPUs): Virgo can connect up to 134,000 TPUs in a single data center and more than a million chips across multiple sites. According to NVIDIA, the A5X instance can deliver 10x lower inference cost per token and 10x higher throughput per megawatt than the previous generation.

NVIDIA also briefly touches on physical and industrial AI, noting that products from firms such as Cadence and Siemens run on its infrastructure and are available on Google Cloud. The company adds that Google’s Gemini platform can also deploy agentic models and workflows in industries such as cybersecurity.

https://wccftech.com/nvidias-rubin-lands-inside-googles-virtual-machine-stretching-multi-site-clusters-to-nearly-1-million-gpus/amp/