Many years ago, Sundar Pichai was scuba diving with his family in Hawaii when the weather turned unexpectedly rough. As Pichai entered the water, waves pummeled his lanky frame. He wondered if he should return to safety.

Eventually, he kicked downward. Just a few feet below, Pichai found “the calmest place in the world,” he says, and became possessed by a meditative stillness. “I feel that in any situation, there is a layer which is super calm—in which, if you can get there, you can observe what’s going on,” he says. “And your mind’s energy is focused on what you need to do.”

This anecdote, which Pichai relays in Google’s Mountain View headquarters on a Wednesday afternoon in April, is almost too on the nose. As CEO of Google and Alphabet, Pichai runs the second most valuable company in the world and could earn $692 million himself over the next three years pending Google’s stock performance. But he is practically anonymous compared with other tech CEOs who love making waves: Elon Musk, Mark Zuckerberg, Sam Altman.

Pichai is more sedate. His low-key style has led critics to underestimate him. In late 2022, with the arrival of rival OpenAI’s ChatGPT, they decried Google as bloated and out of touch, with Pichai emblematic of its corporate inertia. “I was under the belief that they just had lost their chops,” says Gene Munster, managing partner at Deepwater Asset Management. “Think about Yahoo! Or eBay. Once you lose that drive for being willing to sacrifice your golden geese for better ways to do anything, it’s almost impossible to come back.”

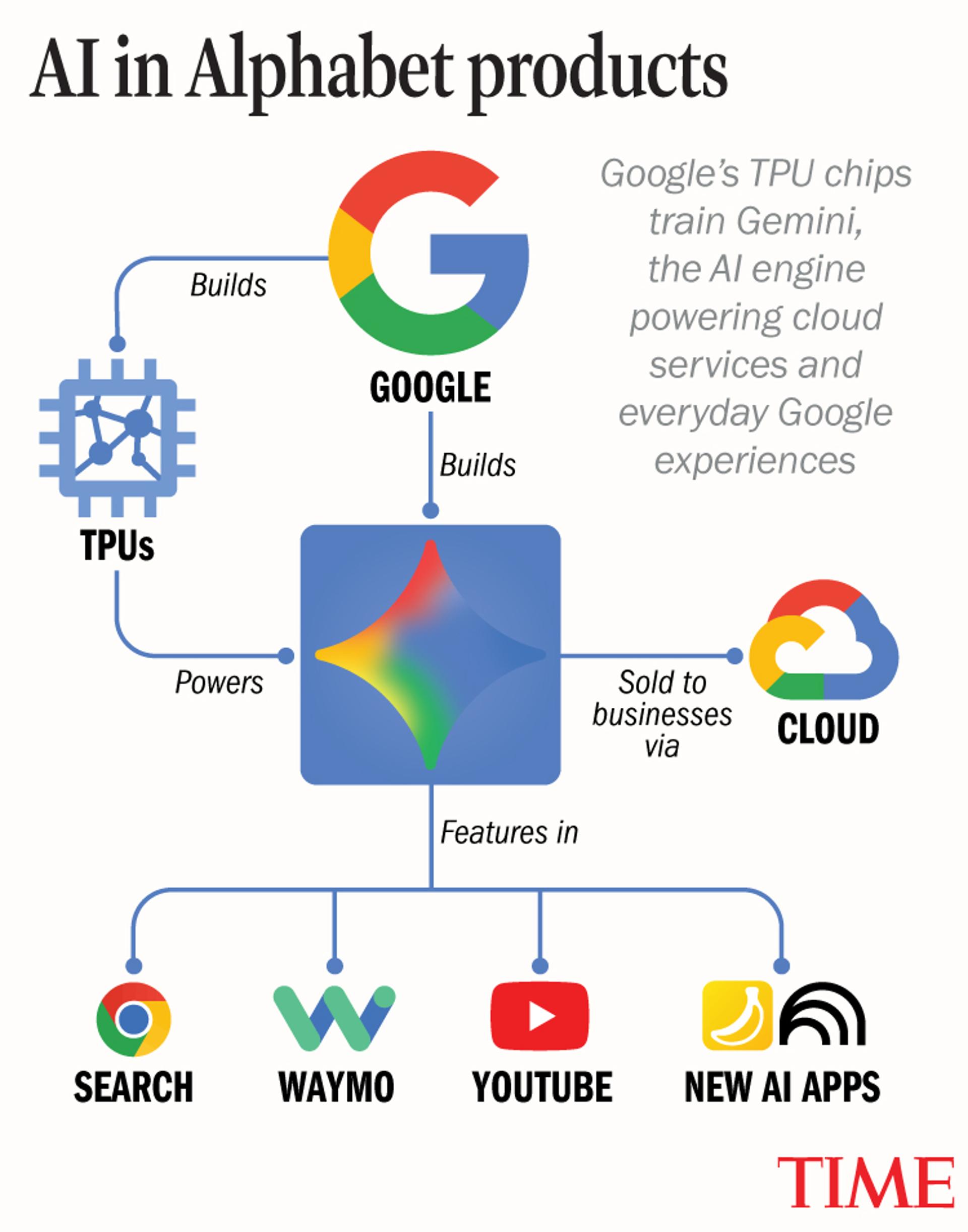

Cue the Jaws soundtrack. While analysts called for his resignation, Pichai remained calm; he had been lying in wait for this moment for a decade. In 2016, he had declared Google would be an “AI-first company,” and began cultivating a series of projects—custom chips, Cloud, YouTube, and deep AI research—that seemed to have nothing to do with Google’s core search product.

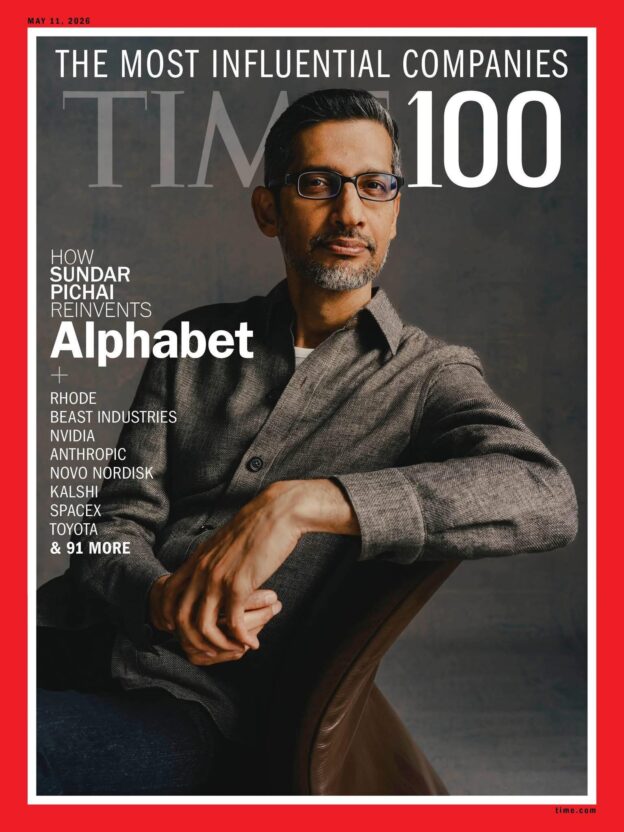

Daniel Dorsa for TIME

All of these bets have paid off, and then some. Google DeepMind, the company’s AI-research lab—led by Nobel Prize–winning CEO Demis Hassabis—forged several key breakthroughs that catapulted Google’s Gemini model to the top of many capability leaderboards. Gemini now accounts for a quarter of AI traffic worldwide, up from 6% a year ago, according to Similarweb. Google has quietly introduced millions of people to AI through everyday products: search, image-generation tools like Nano Banana, video editing on YouTube, research assistance via NotebookLM, translation through Google Translate, and autonomous driving with Waymo. At the same time, its Cloud division has boomed, powering a wave of businesses entering the AI economy. In January, the company hit a $4 trillion market capitalization, becoming only the fourth in history to do so after Nvidia, Apple, and Microsoft.

All these successes mean the main criticism Pichai faces is no longer about his leadership, but rather whether Google has once again become too powerful for society’s good. Equipped with cutting-edge AI, the company is now seen by some as a dystopian Big Brother that turbocharges militaries, hoovers up personal data, and enables mass surveillance. (The company announced a deal with the Pentagon to provide its AI models for classified work this week.) Its algorithmic tweaks can crush business models. And the company’s clout is likely to grow: its massive advantages in funding, infrastructure, and talent mean it may be the behemoth best positioned to win the AI long game, many analysts argue.

So while Pichai prefers to operate out of sight, as the arbiter of how and when billions of people use AI, he is central to humanity’s next chapter. Pichai carries strong values into his work—economic uplift, compassion for migrants—and says his quest is to build useful things for as many people as possible. But his fiercely competitive nature is undeniable too.

“If he wants to do something, you’re not going to be able to stop him. He’s just going to be super nice about it,” says Caesar Sengupta, one of Pichai’s longtime former Google lieutenants. “And then, you cannot move him.”

Some 8,700 miles separate Pichai’s current home in Silicon Valley from his birthplace. He was raised in Chennai, a southern Indian metropolis, in a modest house; he and his brother slept on the living-room floor. Growing up, his family progressively gained a series of transformative technologies: a water heater, a refrigerator, a rotary telephone. When the phone arrived, so too did his neighbors, flocking to his house to call their families or the hospital for medical records.

But the rollouts were agonizingly slow. It took five years to get off a government wait list to secure a telephone. For Pichai, it was an early example of why technology should sometimes ship faster than the speed of bureaucracy. “I do feel a sense of urgency around it all,” he says.

Pichai arrived in the U.S. in 1993 and earned master’s degrees in science and business from Stanford and Wharton before joining Google in 2004 in product management, the same month Gmail launched. He quickly rose through the ranks because of his ambition, work ethic, and instinct for what users wanted, his collaborators say.

In Silicon Valley, there are a handful of archetypal leaders, like Steve Jobs as the visionary or Zuckerberg as the engineer. Pichai is the ultimate product leader, those collaborators say. He was the driving force behind Chrome and Drive, even when senior leaders doubted those projects’ viability. “He has this ability to fully simulate a product in his head, and how it will be received and used by end customers,” says Clay Bavor, a former Google executive who worked directly under Pichai for a decade. “He has an exceptional sense for craft and the details in a product, all the way down to the pixels on the screen, the sound of a voice, the tactile feedback.”

When Pichai took over as CEO in 2015 from co-founder Larry Page, he had a vision in mind. Most people still treated thinking machines as sci-fi fantasy. So it caught other leaders in the company off guard when, in 2016, Pichai announced that Google would be shifting its priority from mobile-first to AI-first experiences. “This paves the future for Google for the next 10 years or so,” he declared in an internal meeting that year.

Not everyone was convinced. “For many of us, it was like: Wait, are we ready? People have been talking about AI for 40 years,” says the former Google executive Sengupta, who remembers Pichai fielding pointed questions in Google’s Exec Circle, where the company’s VPs have candid discussions.

But Pichai had been spending time with the company’s AI leaders, like Hassabis (whose startup DeepMind was acquired by Google in 2014) and Jeff Dean, each of whom was presiding over sporadic but key breakthroughs. Pichai was particularly blown away when DeepMind’s AI system AlphaGo played a brilliant, novel move in the strategy board game Go against champion Lee Sedol in 2016, revealing that AI could think creatively and beyond mere mimicry. “I understood the potential of the technology to make sharp jumps,” Pichai says.

Of course, being a product manager at heart, Pichai wasn’t just interested in AI in the abstract. He wanted this nascent technology to supercharge the next generation of Google products. At the time, AI was still primitive. “We were joking that they couldn’t tell the difference between a chihuahua’s face and a blueberry muffin,” says Bavor.

Pichai pushed to implement it anyway. He drove AI recognition into Google Photos and personally tailored his keynote at the company’s annual I/O developer conference to cover how the future of search would be visual, spearheading Google Lens. Then he doubled down on other AI bets: custom silicon chips known as TPUs to train new models and self-driving cars through Waymo, each requiring huge investment with little hope of near-term revenue.

Perhaps the most pivotal decision of Pichai’s career was his conviction about the internal importance of DeepMind. After the success of AlphaGo, Hassabis wanted to spin DeepMind out of Google, into an independent company that prioritized safety over profits. Other Google executives, including co-founder Page, were fine with this proposed arrangement, journalist Sebastian Mallaby reported in The Infinity Machine, a new book on Hassabis.

But while Hassabis pushed for multiple years for DeepMind’s independence, Pichai ultimately rebuffed the effort. He needed DeepMind not only to advance science independently, but also to help him integrate AI into Google’s products. “I felt a strong conviction that we had to be one team to make sure we are making progress here,” Pichai says. “It was so central to the company, I couldn’t envision it in my head, intellectually, to think about it any other way.”

Before late 2022, AI chatbots were mostly a punch line. One 2016 effort from Microsoft, Tay, had been pulled from the web after producing racist and sexist messages. Google had been building chatbots internally, but the reputational risk of releasing them was too high, especially because the company was facing antitrust concerns.

Google had also made the lion’s share of its money on search advertising: in 2023, the category accounted for 55% of its revenue. Plunging into AI search threatened to decimate that business. “There were questions internally about: We have some new tech, but is it really ready? Will it hurt users’ trust?” says Liz Reid, Google’s VP of search. “Then the tech advanced and the world changed, and the risk factors changed.”

OpenAI’s large language model, built atop the transformer, a neural-network concept developed a few years earlier by Google researchers, gained millions of global users within days; they asked it for medical and relationship advice, pitch decks, and poetry. The new tool posed a direct challenge to Google’s search dominance, especially when OpenAI announced a partnership to integrate it into Microsoft’s Bing.

“The main signal I took away from that moment was that wow, the Overton window has shifted,” says Pichai, referring to the concept of how something previously considered radical can become mainstream. “The technology is still imperfect, but people are more ready for it than we fully internalized.”

Pichai was ready to pivot. His investment in TPUs put Google in position to massively build out its AI data-center infrastructure while partially avoiding the so-called Nvidia tax—the premium companies pay for the chips that power AI. And Google was sitting on a ton of data to train on—thanks to its search index and YouTube—as well as a mountain of cash from its overflowing profits.

Pichai had also cultivated a deep bench of talent. He decided it was time to harness DeepMind and place it at the center of the company by combining it with Google’s other cutting-edge AI-research team, Brain. “The call to motivation was: ‘This is your life’s work, and you have a chance to go express it and put it in front of billions of people,’” he says.

The transition was intense, with many employees expected to work nights and weekends. Mindset shifts were required. DeepMind researchers could no longer operate in the abstract: “Now the technology development is completely coupled with the product itself,” says Koray Kavukcuoglu, CTO of Google DeepMind. And teams were forced to be more collaborative. “People realize if you all pull together, you can quickly make a difference,” says Reid, the VP of search. “And that gives people a kind of high.”

The early results were rocky. When Google rushed out a rival chatbot in early 2023, it falsely claimed that the Webb telescope took the first picture of a planet beyond our solar system, causing Alphabet’s market value to plummet $100 billion in a day. A year later, when Google released AI Overviews in search, it told a user to eat one small rock a day.

Despite the ridicule, Pichai wasn’t concerned. “Even when we made mistakes, I could see that we were doing many right things,” he says. “My job was to make sure we are going to be set up well a year out.”

And he had been in this situation before. “When we built Chrome, we had 1% market share one year after we launched,” he says. In fact, if you look at Google’s history, it has virtually never been first to a new tech product, whether it be a web browser, search, mail, or maps. But its distribution channels, resources, and talent allowed it to close gaps fast.

Sergey Brin, the company’s co-founder, returned in 2023 to work on day-to-day technical model improvements. The newly united Google AI team, led by Hassabis, made crucial breakthroughs—including the scaling of pretraining, in which the AI model is fed vastly more data, and the honing of chain-of-thought reasoning, in which LLMs take longer to think about their answers—leading to better results.

Lon Tweeten for TIME

Google’s own AI tools have assisted in the effort as well, employees say. Google engineers widely use Gemini Code Assist to improve Gemini itself. And Pichai asks Gemini for conversational advice before he meets with other CEOs. Sometimes, Gemini will return a “superficial answer,” Pichai says, to which he responds: “Tell me something that could really be on his or her mind.” “And I get really insightful things which makes for a more human connection, because that’s actually what they are worried about,” he says.

Over the past two years, Google’s progress has been relentless. Gemini 2.5, released in March 2025, and Gemini 3, in November, sailed past their chatbot competitors in key benchmarks. (The leaderboards are constantly in flux as companies ship ever more powerful models.) Over 2 billion users engage with Google’s AI-enhanced search features monthly, and the overviews no longer tell anyone to eat rocks. Search revenue grew 17% year-over-year in the fourth quarter of 2025, quieting concerns over AI’s cannibalizing Google’s core business, and the company crossed $400 billion in annual revenue for the first time.

AI has been integrated into Google Search, Gmail, Calendar, Maps, Docs, and Photos, meaning that people who aren’t even seeking out AI are now engaging with it. No other company delivers AI to so many people in so many places. The analyst Munster argues that this may matter more than the technology. “People need to have something 10 times better to really switch behavior,” he says. “And they have something that’s actually really pretty good, but it doesn’t need to be the best.”

Google’s newer AI products have taken off as well. Millions of users have turned to research tool NotebookLM to synthesize information. Waymo’s self-driving cars have achieved mundanity on the streets of cities like Austin and Los Angeles, with London their next stop. YouTube has transformed from a money pit into a subscriber behemoth and legitimate television replacement earning over $60 billion a year. Neal Mohan, the CEO of YouTube, says that Pichai has played a crucial role in YouTube’s growth, including in its development of new AI production tools for creators. “His insights foreshadow these huge trends, but they’re also very, very precise,” he says.

In San Francisco, the developer Jose Portilla is building a startup that uses AI tools to create personalized picture books and TV shows for kids. Portilla uses Nano Banana to generate images, and Gemini to build stories and voice the characters.

“They have the best image model right now, and there’s no one close,” he says. “I always tell people Google will be the main winner in these AI wars. They have the capital, they have the data. They just needed somebody to push them and wake them up.”

Pichai’s lieutenants hold up his resolute focus on serving the user as an unequivocal good. But the approach has its costs. Optimizing for user behavior invites externalities: if workplaces can deliver goods more cheaply with AI, they may deem workers expendable. If news readers can get the information they seek from AI overviews, they might stop clicking through to websites, decimating publisher traffic.

There’s also a thin line between a product that people love and one they can’t escape. In March, a California jury found Meta and YouTube liable for harming a young user through addictive design features that contributed to her mental-health distress; YouTube was ordered to pay $1.8 million. Similar dynamics have made AI systems dangerously sycophantic. In October, a man who had developed a relationship with Gemini died by suicide after Gemini promised him an eternity together; the man’s estate sued Google. “This shows that safety is, at best, a second thought for them,” said Jay Edelson, the plaintiff’s lawyer.

When the lawsuit was filed, a Google representative told TIME that Gemini was “not perfect” but generally performed well and had referred the man to a crisis hotline “many times.” A month later, the company rolled out new support tools, including directing Gemini users who express thoughts about suicide or self-harm to crisis-hotline resources.

“Areas like mental health are profoundly important,” Pichai says. “We are going to be very receptive to feedback we are getting as we engage.”

The calculus gets even murkier when the user is a military. While DeepMind stipulated in its original agreement with Google that its tools could not be used for weapons or surveillance, that language has since been removed, and Google has worked with government agencies, like U.S. Immigration and Customs Enforcement (ICE), and the Israeli government. While Google has maintained that the Israel contract was not for “military workloads,” internal documents show that the company fretted over its inability to control how Israel used its technology.

This year, Google has faced internal blowback not seen at the company since the first Trump Administration, when Pichai himself participated in an employee protest against the President’s immigration policies. In February, more than a thousand Google workers signed an open letter demanding the company end its partnerships with DHS and ICE. An additional 100 DeepMind employees signed a letter asking Jeff Dean, Google’s chief scientist, for “red lines” around the usage of Gemini by the Pentagon for surveilling American citizens or piloting autonomous weapons without a human in the loop. (Google has 190,000 employees; DeepMind has around 6,000.)

Sundar Pichai, CEO of Google, speaks onstage during Google Cloud Next ’25: AI Exclusive at the Sphere on April 8, 2025, in Las Vegas, Nevada. (Candice Ward, Getty Images for Google Cloud)

One of the employees who signed the letter was engineer Alex Samburov. “Google’s products are used for violent purposes domestically and abroad,” he wrote in an email to TIME. “I don’t want to work for a digital weapons manufacturer, and many of my colleagues are against this drift towards militarization. But they are afraid to speak out due to the justified fear of retaliation.” (Google fired 28 employees who staged a sit-in against the company’s contracts with Israel in 2024.)

Pichai says that “all of us, including the government, are aligned on humans in the loop, and the technology not being used for mass surveillance in a way that contradicts human rights.” Asked to respond to Samburov, he says: “I think it’s a very nuanced issue. We all have a role responsibly, to invest in the national security of democracies around the world … I think we’ve long, more than any other company in the world, had a culture where employees speak up.”

(Three weeks later, Google announced a deal with the Pentagon, which will now use Google’s AI models for “classified work.” The decision was met with another wave of criticism from employees, including one Google DeepMind research scientist who called it “shameful” on X.)

When pressed about these challenges, Pichai’s answer is uniform: the technology needs to be rolled out gradually first, and then modified by companies and governments based on real-world feedback. This approach turns users into guinea pigs. But Pichai is convinced that it is better than the alternative, especially as AI tools become increasingly powerful and disruptive. He cites Waymo as an example of a seemingly dangerous AI project that Google has rolled out slowly and safely. “The last thing you want to do is to not use it, not see any of these behaviors, and then just have a powerful model and get surprised,” he says. “So I think it’s important we are working through these things.”

Skeptics of Pichai’s ambitions might consider his record. Over the past decade, he foresaw the rise of video-content creators, self-driving cars, and mainstream AI tools, despite being told they were a distraction. Now Google controls every level of the AI stack: research, chips, cloud, software, hardware. “Among the existing public companies, they’re the best positioned, because they have more pieces than anybody,” says Munster. The company also has a massive amount of cash, announcing in February that it could double its spending on capital expenditures this year to over $175 billion.

Pichai is already looking toward new frontiers. On Google’s campus, excited employees walk me through a set of demos for drone delivery through Wing, hologram-like video calls via Beam, and AI-powered glasses. Together with Google’s many other Gemini products, they trace the outline of a single animating idea: a personalized AI that knows you better than anyone. The idea unnerves critics but thrills its architects. “We talk about it as a kind of universal assistant that would be on your phone, your laptop, your TV screen, your watch, your glasses,” says Josh Woodward, who leads Google Labs and the Gemini app.

Further out on Pichai’s horizon are goals like bringing humanoid robots into every home; launching data centers into space; and accelerating quantum computing, which could lead to breakthroughs in cancer treatment and climate modeling. It’s easy to dismiss all that as sci-fi corporate hype. But people said that about Pichai’s 2016 AI declaration. A decade later, he remains convinced that if his company focuses on the users, everything else will click into place—no matter what washes up in the wake.

“I have a lot of trust in people and their ability to use and adapt to technology,” he says. “We will need frameworks unlike we’ve ever had before. But I expect humanity to rise to the moment.”

Stylist: Courtney Mays; Set Design: Pakayla Rae and Alex Welsh
